Your Specialized Practice Deserves Specialized AI

We get the appeal of building your own AI workflows with a foundational model, such as Claude. It’s capable, it’s fast, and it feels like you’re getting ahead of the curve.

We ran the tests ourselves: EvenUp vs. Claude. The results were instructive. Claude is an impressive tool. It and other leading foundation models are incorporated into our product and used extensively in our development.

But the point remains: Claude alone is not built for your firm. Personal injury law is complex, document-heavy, and ethically unforgiving. It demands more.

Here are five reasons EvenUp wins where Claude and other foundation models fall short.

- You’re underestimating the monetary and technical costs of DIY AI.

- Claude isn’t designed for complex and voluminous case files. EvenUp is.

- Domain expertise is fundamental to everything EvenUp does.

- Claude Starts from Scratch. EvenUp Starts from Your Standards.

- Disbarred for Using the Wrong AI Tool?

1. You’re Vastly Underestimating the True Costs of DIY AI

Building and running your own personal injury AI is more expensive than you think. Most firms don’t realize this until they’re already paying for it.

The cost problem starts with the AI itself. As case volume grows, you’ll face hard decisions most firms never anticipate: determining which AI tasks require the most powerful, expensive models and which tasks can get by using cheaper ones.

Managing this balance between tasks and model complexity is critical for controlling costs, especially at scale, without sacrificing quality. Each wrong decision compounds costs. Most firms don’t see this cliff coming until they’re already over it.
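
To make the task-versus-model tradeoff concrete, here is a minimal sketch of routing tasks to model tiers and comparing the estimated spend. The tier names, prices, and task types are made-up assumptions for illustration only; they are not real vendor pricing and not a description of how EvenUp routes work.

```python
from dataclasses import dataclass

# Hypothetical per-1K-token prices; real pricing varies by vendor and changes often.
PRICE_PER_1K_TOKENS = {"small": 0.001, "large": 0.015}

# Hypothetical mapping from task type to the cheapest tier assumed to handle it well.
TASK_TIER = {
    "classify_document": "small",
    "extract_billing_codes": "small",
    "draft_demand_letter": "large",
}


@dataclass
class Task:
    kind: str
    tokens: int


def route(task: Task) -> str:
    """Pick a model tier; unknown task kinds default to the large tier."""
    return TASK_TIER.get(task.kind, "large")


def estimated_cost(tasks, force_tier=None) -> float:
    """Sum the estimated cost of tasks, optionally forcing every task onto one tier."""
    total = 0.0
    for task in tasks:
        tier = force_tier or route(task)
        total += task.tokens / 1000 * PRICE_PER_1K_TOKENS[tier]
    return total


tasks = [
    Task("classify_document", 200_000),
    Task("extract_billing_codes", 300_000),
    Task("draft_demand_letter", 50_000),
]
routed = estimated_cost(tasks)
all_large = estimated_cost(tasks, force_tier="large")
print(f"routed: ${routed:.2f}  all-large: ${all_large:.2f}")
```

With these invented numbers, routing comes to about $1.25 against $8.25 for sending everything to the large tier. The hard part in practice is the mapping itself: deciding which tasks a cheaper model can handle without a drop in quality.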

And that’s before you account for the engineering cost of building the thing in the first place. A prototype is impressive in a demo. A product has to scale across hundreds of cases, multiple users, and evolving workflows. It has to work reliably, every day, without breaking. There are engineering tasks you simply cannot vibe code your way out of. Your firm likely doesn’t have that expertise on staff. Hiring and developing it runs directly counter to the goal of increasing capacity while curbing headcount.

Then there’s the adoption problem. If your team already struggles to maintain knowledge bases, follow training protocols, and apply internal templates consistently, a custom AI infrastructure will be 100x harder to enforce. The tool only works if everyone uses it correctly. In practice, they won’t.

EvenUp solves all of this:

- We’ve built cost-optimization layers across our stack, so you get high-quality output at a predictable price point.

- We manage the entire backend, from infrastructure to outputs to ongoing development, so scaling never becomes your problem.

- We partner with your team on adoption, change management, and getting real return from the platform.

2. Claude Isn’t Designed for Case Files. EvenUp Is Powered By Them.

Personal injury cases are built on large, messy, unstructured document sets. Medical records with inconsistent formatting. Handwritten notes. Faxed pages. Hundreds of files across dozens of providers.

General-purpose tools like Claude struggle with this volume and complexity. They rely on chunking strategies that break continuity across complex case files. The larger and messier the case, the worse the problem gets. This isn’t a limitation that better prompting fixes. It’s a structural one.
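
A small sketch shows how chunking can break continuity. It uses a naive fixed-size chunker, which is an assumption chosen for simplicity rather than a description of any particular product, and the record text is invented.

```python
def chunk_fixed(text: str, size: int) -> list[str]:
    """Split text into consecutive fixed-size character chunks with no overlap."""
    return [text[i:i + size] for i in range(0, len(text), size)]


# Invented record for illustration only.
record = (
    "Provider: Dr. A. Rivera, Eastside Ortho. "
    "Date of service: 2023-03-14. "
    "Charge: $1,250.00 for CPT 99214."
)

chunks = chunk_fixed(record, 50)

# Check whether any single chunk contains both the provider and the charge.
together = any("Rivera" in c and "$1,250.00" in c for c in chunks)
print(len(chunks), together)
```

Here the provider name and the charge land in different chunks, so a step that sees one chunk at a time cannot connect them without extra machinery. Overlapping windows or larger chunks reduce the problem but do not remove it once related facts sit hundreds of pages apart.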

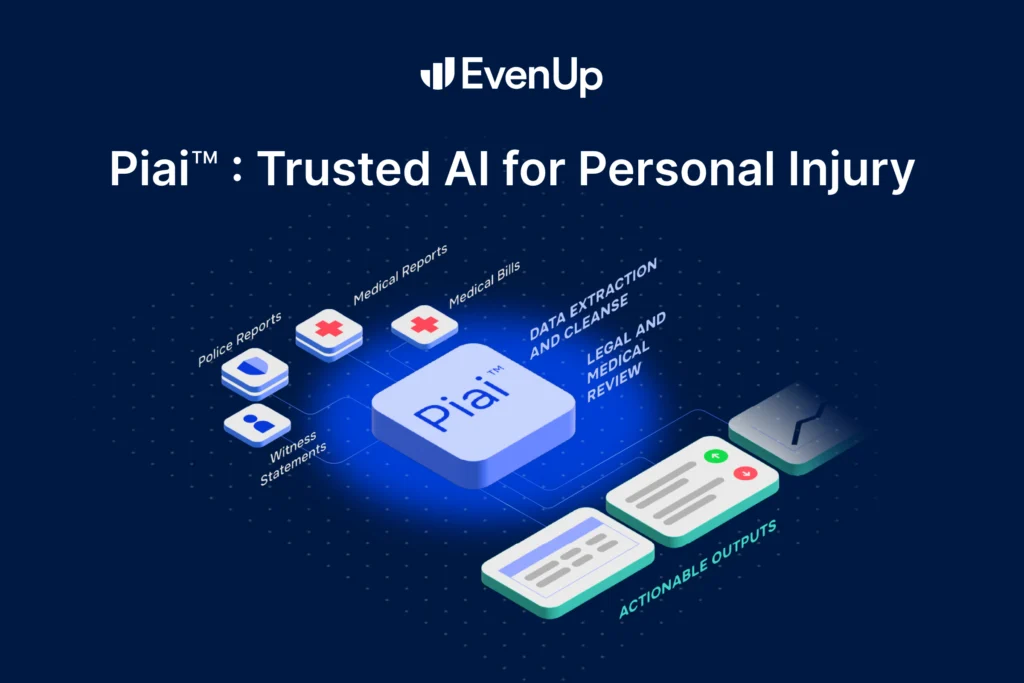

EvenUp was built specifically to manage and move case files while maximizing their value at every stage. Our system, Piai™, is the industry’s most advanced system of personal injury AI models, specialized at every level of the stack: purpose-built infrastructure, UI/UX designed for PI workflows, and specialized models including purpose-built agents assigned to specific tasks from extraction and reconciliation to interpretation and application.

Piai™ operates across two distinct layers: a Reading Layer and a Writing Layer, with specialized agents working between them.

The Reading Layer is where the hardest work happens. Calling it a “reading” layer undersells what it actually does. It processes your raw documents directly, not extracted summaries, and then:

- Understands context across your entire case file, recognizing the same provider under different names, dates that shift in significance, and treatment patterns that matter to case value

- Links information together, connecting providers to dates of service, charges, medical history, and billing codes across every document

- Tracks where everything came from, so any output can be traced back to its source document in seconds
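
The three ideas above (resolving provider names, linking facts, and tracking provenance) can be sketched in a few lines. This is a toy illustration under stated assumptions, not Piai™’s implementation: the alias table, the `Fact` fields, and the file names are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical alias table; a real system would need far more robust matching.
ALIASES = {
    "eastside ortho": "Eastside Orthopedics",
    "eastside orthopedics": "Eastside Orthopedics",
    "e. ortho": "Eastside Orthopedics",
}


@dataclass(frozen=True)
class Fact:
    provider: str  # provider name as written in the source document
    date: str      # date of service, ISO format
    charge: float  # billed amount in dollars
    source: str    # hypothetical source file name
    line: int      # line number within the source file


def canonical(name: str) -> str:
    """Map a raw provider name to a canonical one; unknown names pass through."""
    cleaned = name.strip()
    return ALIASES.get(cleaned.lower(), cleaned)


def link_by_provider(facts):
    """Group facts under their canonical provider, keeping each fact's source."""
    grouped = defaultdict(list)
    for fact in facts:
        grouped[canonical(fact.provider)].append(fact)
    return dict(grouped)


facts = [
    Fact("Eastside Ortho", "2023-03-14", 1250.00, "records_01.pdf", 42),
    Fact("E. Ortho", "2023-04-02", 310.00, "billing_07.pdf", 8),
]

linked = link_by_provider(facts)
total = sum(f.charge for f in linked["Eastside Orthopedics"])
citations = [f"{f.source}:{f.line}" for f in linked["Eastside Orthopedics"]]
print(total, citations)
```

Because every fact carries its source file and line, any total or summary built from the linked records can point back to where each number came from.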

The Writing Layer uses that structured data to generate documents, analysis, and answers, constrained entirely by what the Reading Layer found in your files, not by general world knowledge. EvenUp isn’t drawing on the open internet. It’s drawing on your case, with line-level citations throughout.

The work happening between these layers is particularly challenging and requires significant bespoke engineering to get right. It’s also where general-purpose AI consistently falls short.

3. Data and Domain Expertise that Can’t Be Prompted

Piai™ is only as good as what it’s trained on. This is where the gap between EvenUp and general-purpose AI becomes impossible to close with a prompt.

General-purpose models train on broad, publicly available internet data, often labeled by outsourced workers with no expertise in personal injury law.

EvenUp’s models are fine-tuned on the industry’s largest and fastest-growing PI dataset, built from years of generating winning demands and medical chronologies across hundreds of thousands of real cases.

Our feedback loop compounds this advantage every day. EvenUp employs hundreds of current and past attorneys, firm operators, and other legal experts. They provide real human input into everything our platform generates. Every correction makes the next output sharper.

General AI starts fresh with every prompt. EvenUp gets better with every case.

4. Claude Starts from Scratch. EvenUp Starts from Your Standards.

Every PI firm has a voice. A preferred structure for demands. A way attorneys phrase liability arguments. Formatting standards that reflect years of institutional refinement.

Claude doesn’t automatically retain your firm’s standards. Replicating your voice, structure, and formatting requires deliberate technical setup. This includes system prompts, project instructions, and pasted examples. Someone on your team has to build this from scratch, maintain it as voice and preferences evolve, and redistribute every time something changes.

Then your team has to consistently use the right prompts every time they open a new document. In practice, they won’t.

EvenUp gives your firm greater consistency in tone, prose, and document structure. Our AI Drafts features, such as Mirror Mode, are meant to reduce the rewrites and edits that drain the efficiency from general AI processes.

Going beyond voice consistency, EvenUp provides clear visibility into how every draft is constructed by listing the inputs, assumptions, and contextual signals that guide our AI output. And given our domain expertise, we’ve embedded personal injury best practices into every output.

Our output isn’t a starting point that your team has to rebuild. It’s a real draft that already sounds like you and is sharpened to win.

5. Disbarred for Using the Wrong AI Tool?

I don’t think these risks get nearly enough attention. Uploading client records to a general-purpose AI tool isn’t just a security risk; it’s also a disciplinary one.

The ABA’s Formal Opinion 512, from July 2024, makes clear that under Model Rule 1.1, attorneys must understand the limitations of any AI tool they use, with hallucination chief among them. Under Model Rule 3.1, lawyers are further obligated to ensure that AI-generated hallucinations don’t form the basis of claims or filings.

State guidance is similar. California’s SB 574, for example, explicitly states that attorneys’ duties of confidentiality, competence, and nondiscrimination apply to the use of generative AI.

General-purpose AI tools weren’t built to meet these obligations. They’re prone to hallucinations that can corrupt demands, undermine credibility with opposing counsel, and result in court sanctions.

While no AI is perfect, EvenUp is purpose-built to minimize these risks, unlike general models. EvenUp is built with trust and transparency in mind. We know that a hallucination may creep in here and there. That’s why we’ve embedded line-level citations into our casework so that you and your team can see exactly where every case fact is being pulled from.

Accuracy and compliance aren’t separate concerns at EvenUp. They’re built from the same foundation. We hold a SOC 2 Type 2 certification and are HIPAA-compliant. With EvenUp, client data stays isolated within your case files. It functions like a vault, not an open network.

Firms winning with AI aren’t just using better technology. They’re working with partners who understand their practice, help them get up and running, and stay invested in their outcomes over time. That’s a meaningful distinction.

General-purpose AI gives you a capable starting point and then leaves you to figure out the rest. Complexities such as implementation and process overhauls fall to your firm.

EvenUp is designed to be intuitive for the paralegals, case managers, and attorneys who use it every day. We handle onboarding, provide hands-on training, and offer real human support from people who understand PI law, not a generic help desk.

And the results speak for themselves. Take John K Zaid and Associates as an example:

- 30% more demands: The firm has mastered EvenUp’s proactive demand automation, reducing time-intensive reviews by automatically surfacing key case details and medical records.

- 37% of cases flagged early for updates: Proactively surfaced treatment issues requiring follow-up in 37% of client conversations and flagged missed appointments in 20% of cases.

- Consistently higher settlements: The firm is using EvenUp to consistently strengthen negotiations, recently achieving $30,000 policy limits on a case that typically lands under $10,000.

These aren’t outcomes you get from a general AI tool. They’re outcomes you get from a system purpose-built for personal injury law, combined with a partner who is accountable for making it work for your firm.

General-purpose AI will keep getting better. So will EvenUp. The difference is that our improvements are made specifically for PI law, guided by the firms we work with every day, and delivered by a team that doesn’t disappear after you sign.

That’s what it means to be purpose-built. That’s what it means to be a true partner. That’s EvenUp.

PLAASPre-Litigation as a Service

Proactive WorkflowsMake smarter case decisions with AI Playbooks

AI DraftingDraft any legal document in minutes

AI AssistantManage firm performance with critical insights

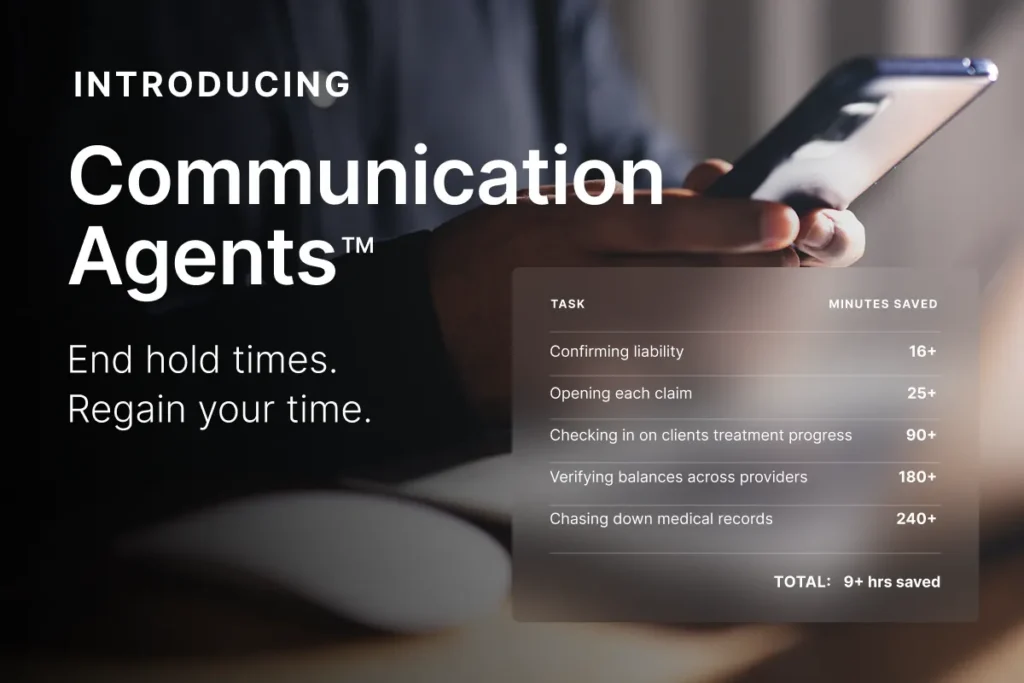

Communication AgentsFaster, more accessible client communication

Executive AnalyticsManage firm performance with critical insights

IntegrationsConnect your casework across platforms